A Note on Enterprise Adoption

The Synergist problem at scale

Most enterprise AI strategies look convincing on paper. Far fewer translate into meaningful, sustained results.

In 2025, research from MIT’s NANDA initiative, drawing on executive interviews, employee surveys, and analysis of hundreds of AI deployments, reported that a significant proportion of enterprise generative AI pilots were failing to deliver measurable financial returns within their initial deployment window. The figure attracted attention and scrutiny in equal measure. Its definition of success was narrow: it focused on short-term P&L impact rather than longer-term capability building, productivity gains, or compounding advantage over time.

At the same time, other perspectives have pointed in a different direction, with many organisations reporting positive outcomes from AI adoption when measured more broadly. The truth sits between these positions. Enterprise AI is neither failing nor succeeding uniformly. It is producing highly uneven results, and the variance between organisations, and even between teams within the same organisation, is often extreme.

This is not a story about technology failing. The models are more than capable. It is a story about the conditions in which Human-AI Partnership either flourishes or stalls, and about what those conditions require from the people responsible for creating them.

Why large organisations struggle

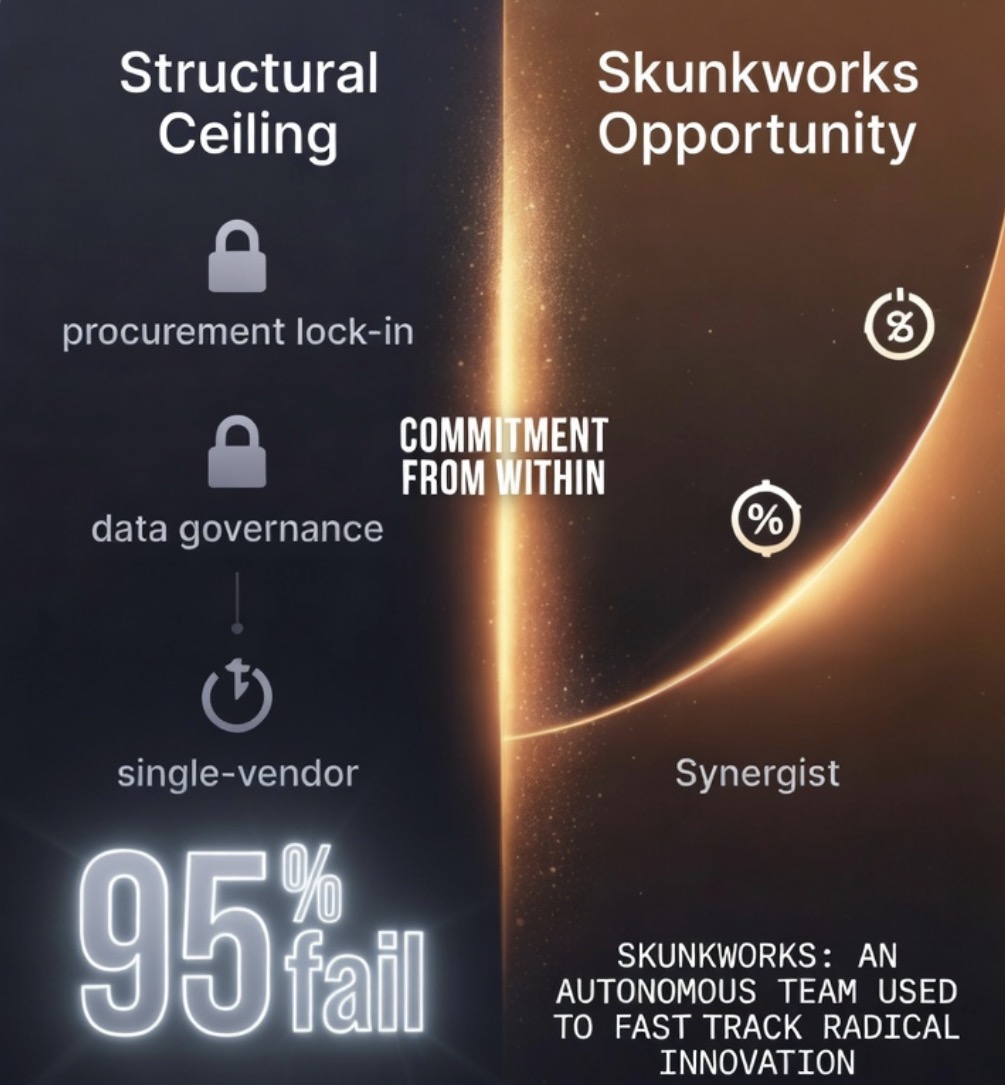

Large organisations operate within structural constraints that make genuine Human-AI Partnership difficult to achieve at scale, even when intent is strong and investment is significant.

Procurement lock-in is one of the most visible. Organisations standardise on a single approved vendor, sign enterprise agreements, and manage AI as a software category rather than as a capability. The compounding returns from genuine partnership come from fluency across approaches and contexts, from knowing when to use one system and when to use another. A single approved vendor often becomes a constraint dressed as a solution.

Data governance architecture introduces a second limitation. Necessary protections around privacy, compliance, and risk create boundaries on what can be shared with external systems. These boundaries are rational, but they can also restrict the depth of context that allows AI to become genuinely useful. The gap between what the system is permitted to know and what a skilled practitioner needs it to know can be large enough to materially reduce value.

The political reality compounds the structural one. In large organisations, the individuals with the deepest curiosity about Human-AI Partnership are rarely the ones with the authority to change policy, and those with the authority are rarely operating at the level of personal practice required to see its full value.

Top-down AI programmes produce usage. They do not produce Synergists.

Mandated adoption, measured through dashboards and seat counts, tends to create compliance rather than transformation.

A further constraint is emerging more quietly but no less significantly: cognitive overload. As AI becomes more present in daily workflows, many individuals report a form of fatigue that comes not from a lack of capability, but from the constant requirement to engage, evaluate, and respond. Without structure, AI can increase the volume of thinking without improving its quality. This is the early form of what is increasingly described as AI burnout: exhaustion not from work alone, but from unmanaged interaction with systems that amplify noise as easily as they amplify clarity.

The shadow AI phenomenon reflects the same dynamic from another angle. In many organisations, employees continue to use personal AI tools even where official programmes are limited or stalled. The practitioners are still there. They are simply operating at the edges, finding ways to do the work effectively despite the system rather than because of it.

Where the opportunity lives

This is where the narrative shifts.

The structural constraints of large organisations are real, but they are not absolute. Enterprise adoption does not require universal transformation to produce meaningful results. It requires pockets of excellence: individuals and teams operating at a higher level of practice, often within the same organisation that struggles to implement change at scale.

You do not need the entire organisation to move. You need a committed group, the right mandate, and a willingness to engage at the level of personal practice that the Method requires.

The structural vehicle for this is well understood but rarely executed with seriousness: the internal start-up, or skunkworks unit. This is a group with sufficient autonomy to operate differently, an explicit mandate to move at a different pace, and leadership that is actively practising the Method rather than simply overseeing its implementation.

Teams that reach Synergist level tend to share a common pattern. They are led by individuals who treat AI partnership as a personal discipline, not a delegated initiative. They define success in terms of outcomes rather than activity. And they protect the work long enough for it to produce results that are difficult for the wider organisation to ignore.

The ceiling is rarely set by the organisation alone. It is set by the willingness of individuals within it to build something different, often before the system around them is ready for it.

The Veritas Method® does not require permission from above. It requires commitment from within.

The organisations that succeed will not be those that adopt fastest. They will be the ones that practise deepest.